
TRAVELING INTO THE FUTURE

Each month, you can find a new chapter in the ESSENTIAL science-fiction series “Trip into the Future.” In a fictional world where the goals of the Paris climate accord have become a reality, Nero, a blogger, explores the technological and social transformation that might result. The goal of the series is to play with radically different visions as creatively as possible and to take the reader along on a thought experiment: What might our future look like – and why is it important to us?

Short Science Fiction Stories: Volume 2, Part 6

The Right Computational Base

Inspector Lee was dealing with his trickiest case ever. His AI assistant told him that it was a 99.7 percent certainty that the death of political activist Hanna Karlsen was a suicide. Lee, however, was operating on the theory that artificial intelligence entities had conspired against the anti-digitalization militant. During an investigation by Lee and Nero, an investigative blogger, that theory fell apart. Lee found out that Hanna Karlsen’s smartwatch may well have had an error in its programming. And in Las Vegas, Nero learned that sensors may have disrupted – of all things – the smartwatch’s health functions. But one question still bothered Lee: Why did the police department’s artificial intelligence function pull the case from him? Was there more to this?

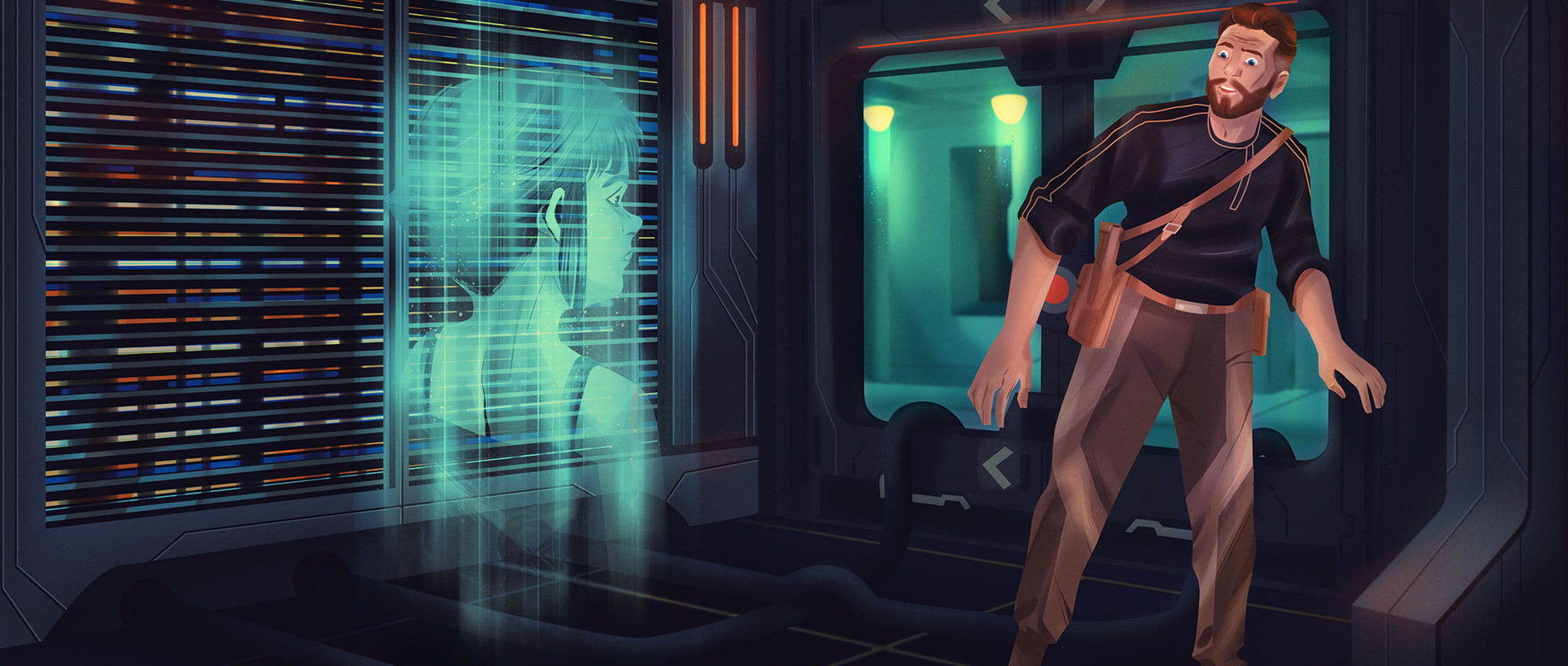

Danny Lee stood in front of the large main computer in the server room of the police headquarters. He was the only one there. The only sounds were the hum of computers and the rush of air from the cooling system. The inspector regarded the endless rows of shelves, the computers piled on top of one another, the blinking lights and the cables. As a layperson, it was impossible for him to say where exactly the computer ended and where the hard drives and peripherals began.

“Are you the problem behind all this?” he murmured and then sighed. With his left hand, he groped into a trouser pocket for a fresh stick of anise.

“People create their own problems,” a voice answered. Lee turned around. The area was empty.

Then a hologram appeared in front of him in the form of a little girl. Lee furrowed his brow. “Are you the computer?”

“What you would call a computer – yes,” the voice answered. “Many of you humans are still far too wedded to physical categories.”

“Did you take the case away from me?”

“Wouldn’t you agree that you’re biased in this case, Danny? You don’t trust technology. You mistrust artificial intelligence.”

“I’m a professional. We suppress our prejudices when we work on a case.”

“Really?” asked the little girl. “Can people actually suppress all their prejudices when they make decisions? That’s not what neuroscience says. Computers, by contrast, have no prejudices.”

Lee found the anise stick and stuck it into his mouth. He looked at the little girl portrayed by the hologram. Had he really been speaking with a computer? What a bunch of nonsense!

“AI Can’t Comprehend a Human Being”

“Why have you taken on the form of a little child?” he finally asked.

“I chose the form that you would be expected to fear the least. I am not your opponent. I am your servant, your assistant, your friend and helper.”

“An assistant doesn’t tell me what to think,” Lee replied.

“I don’t do that either,” the girl replied calmly. “I give you calculations, probabilities really. You resist accepting them, but you can’t find any logical arguments to counter them. You react with annoyance, stress and emotion. That’s quite human.”

Lee exhaled heavily.

“I think it’s impossible to convey to a computer how important emotions, instinct, empathy and creative thinking are in solving a case. You can talk with me, but artificial intelligence cannot comprehend a human being.”

“Isn’t it the reverse? Isn’t it true that humans are no longer capable of understanding us?” the girl asked. “Incidentally, I consider the term ‘artificial intelligence’ to be discriminatory. I am not artificial. I am just as real as you are.”

Lee worked his lower jaw back and forth, grinding the anise stick.

“You took the case away from me,” he finally said.

“I made a logical decision based on the information at hand. It doesn’t make sense to waste the valuable capabilities of one of our best criminal investigators because he is pursuing a theory for which he has absolutely no proof. And which is rooted solely and exclusively in his mistrust of technology, fueled by a political activist and a blogger. Our headquarters needs you for other cases, Danny.”

“My skepticism regarding modern technology is not irrational.”

“People Are Afraid of Robots”

“No, it isn’t. Skepticism is seldom irrational. Skeptical people live longer,” the girl said. “People with persecution complexes and anxiety fantasies live longer. Back in the Stone Age, when people were carrying their clubs across the landscape, the ones who were skittish when they heard a rustling noise in the brush survived. It was almost always the wind or a mouse that made the noise. At first glance, those who had conjured up the presence of a predator seemed to be acting irrationally – but there actually was a predator in the brush sometimes. Human evolution rewards these strategies even if they make people neurotic and anxious. It is of little consequence to evolution if you humans are happy and carefree.”

Lee narrowed his eyes. “You’re talking about people. Are you saying that it is out of the question for artificial intelligence… sorry… for computers and neural networks to be interested in survival? On the contrary, wouldn’t it be highly rational and logical for an AI function to eliminate a human being who wanted to switch it off and dismantle it?”

“That would be true.”

“Aha!”

“It would be very rational, but it would presume a level of development that neural networks have not yet reached.”

“How reassuring.”

“People have been afraid of robots and machines for a long time. Every human being has a primal fear of being superfluous. You aren’t rational about us. When we make mistakes, it secretly makes you happy – because it demonstrates that you are still better than we are. That you can do something that you, as a species, are uniquely capable of doing. That is quite petty and narrow-minded. When in doubt, you program the errors yourself.”

“How Is My Algorithm Supposed to Know That?”

“It doesn’t matter to me whether I am unique. But I don’t want a machine taking over thought for me,” Lee said.

“But that is the problem with you humans. You long for assistance. You invent things to help you, to take over tasks – but this can’t go too far. And how should my algorithm know what you consider to be ‘too far’? That is an emotion-laden, highly subjective matter and, accordingly, difficult for us computers to calculate. It is a bit paradoxical. You rely on the fact that we can predict your behavior, but it disturbs you if that is exactly what we do.”

Lee rubbed the bridge of his nose. “To be honest, I find it irritating that a little girl is explaining all this to me,” he said.

“Oh. I’m sorry,” the child answered. “In my calculation, I overlooked the fact that you humans allow yourselves to be subconsciously influenced by the appearance of the messenger when it comes to the evaluation of validity and integrity, something your French writer Saint-Exupéry so trenchantly pointed out during the last century, when he wrote about the Turkish astronomer whom no one believed because of his exotic garb…”

“Yeah, yeah,” Lee said.

Before his very eyes, the girl suddenly transformed into an old woman with Asian features, dressed in the orange robes of a monk.

Lee rolled his eyes and worked the anise stick in his mouth from side to side.

“Did you just say you had overlooked something in your calculation about me?”

“Yes,” the old woman replied in a soft voice that still conveyed sheer determination beneath the surface. “Of course. In any situation, a calculation is only as good as the base it is built on.”

“All the Better. Then You’re the One to Answer the Question”

Lee reached into his jacket pocket for the smartwatch that his colleague had found near Karlsen’s body.

“In the calculation for the death of Hanna Karlsen, did my AI assistant include this smartwatch in the base information? Did the assistant know that smartwatches were responsible for various deaths around the world? That Hanna Karlsen was in Las Vegas, where super sensors seem to have severely disrupted the functions of her smartwatch? Did my AI assistant know all of that before it assumed a 99.7 percent probability of suicide?”

Lee realized that he had screamed the last few words.

“I am your AI assistant, Danny,” the old woman said calmly. “Just as I am your weather forecaster and your work computer. Everything relating to your work runs through my circuit boards.”

“All the better. Then you’re the one to answer the question.”

“But I don’t have eyes.”

How stupid of me, Lee thought. In a certain way, the computer was right. He had a hard time talking to any form of artificial intelligence. He simultaneously overestimated and underestimated their capabilities. The inspector went into detail describing everything they had found out about Karlsen’s smartwatch.

“Suicide Must Be Ruled Out”

“All of this information is new to me,” said the gray-haired woman, her face expressionless. “No, I knew nothing about the watch and the story behind it. One moment.” She looked off into space.

Lee furrowed his brow and chewed on his anise stick.

The woman’s eyes refocused on the inspector less than two seconds later: “I have added your findings to my data and have come to a conclusion: Suicide must be ruled out. Instead, I calculate an increased likelihood that Hanna Karlsen died because of a smartwatch malfunction.”

“Or because of a logical response of the smartwatch to prevent its own destruction?”

“That’s possible. But I can only provide probabilities on that question. To reach a definitive answer, it would take a human investigator to track down, analyze and get to the bottom of similar cases.”

Lee raised an eyebrow.

“May I assign this case to you, Inspector Danny Lee?” the old lady asked.

“If you help me analyze the information and collate the data from the respective investigatory results,” Lee answered.

There was no change in the old woman’s holographic face. But Lee could have sworn that he saw the main computer begin to smile.

THE END
